As described in the introduction to the domain partitioning, the domain is partitioned into 10 diamonds. Each diamond is partitioned into a number of subdomains, and each subdomain is partitioned into cells that correspond to the elements of the finite element mesh.

Each subdomain is assigned a (globally) unique ID.

Each subdomain is assigned to one of the parallel (MPI) processes. When running on multiple GPUs, each (MPI) process is assigned to exactly one GPU. A process may own more than one subdomain.

To organize the process-local subdomains, each subdomain_id is mapped to a process-local local_subdomain_id, which is just an integer between 0 and the number of subdomains on that process.
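The mapping from global to process-local IDs can be illustrated with a minimal sketch. It is not the library's implementation: the function name `build_local_subdomain_ids`, the use of plain integers as global IDs, and the choice to number local IDs in sorted order of the global IDs are all assumptions made for illustration.

```python
def build_local_subdomain_ids(owned_subdomain_ids):
    """Map each global subdomain ID owned by one process to a local index.

    Hypothetical helper: global IDs are modeled as plain integers, and the
    local numbering follows the sorted global IDs (an assumption chosen
    here only to make the result deterministic).
    """
    return {
        global_id: local_id
        for local_id, global_id in enumerate(sorted(owned_subdomain_ids))
    }


# Example: a process that owns three subdomains with made-up global IDs.
owned = [42, 7, 19]
local_ids = build_local_subdomain_ids(owned)

# Invert the mapping to look up the global ID from a local index.
global_ids = {local_id: global_id for global_id, local_id in local_ids.items()}
```

With a mapping like this, per-process data can be stored in a contiguous array indexed by the local ID, while communication between processes still refers to the global ID.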

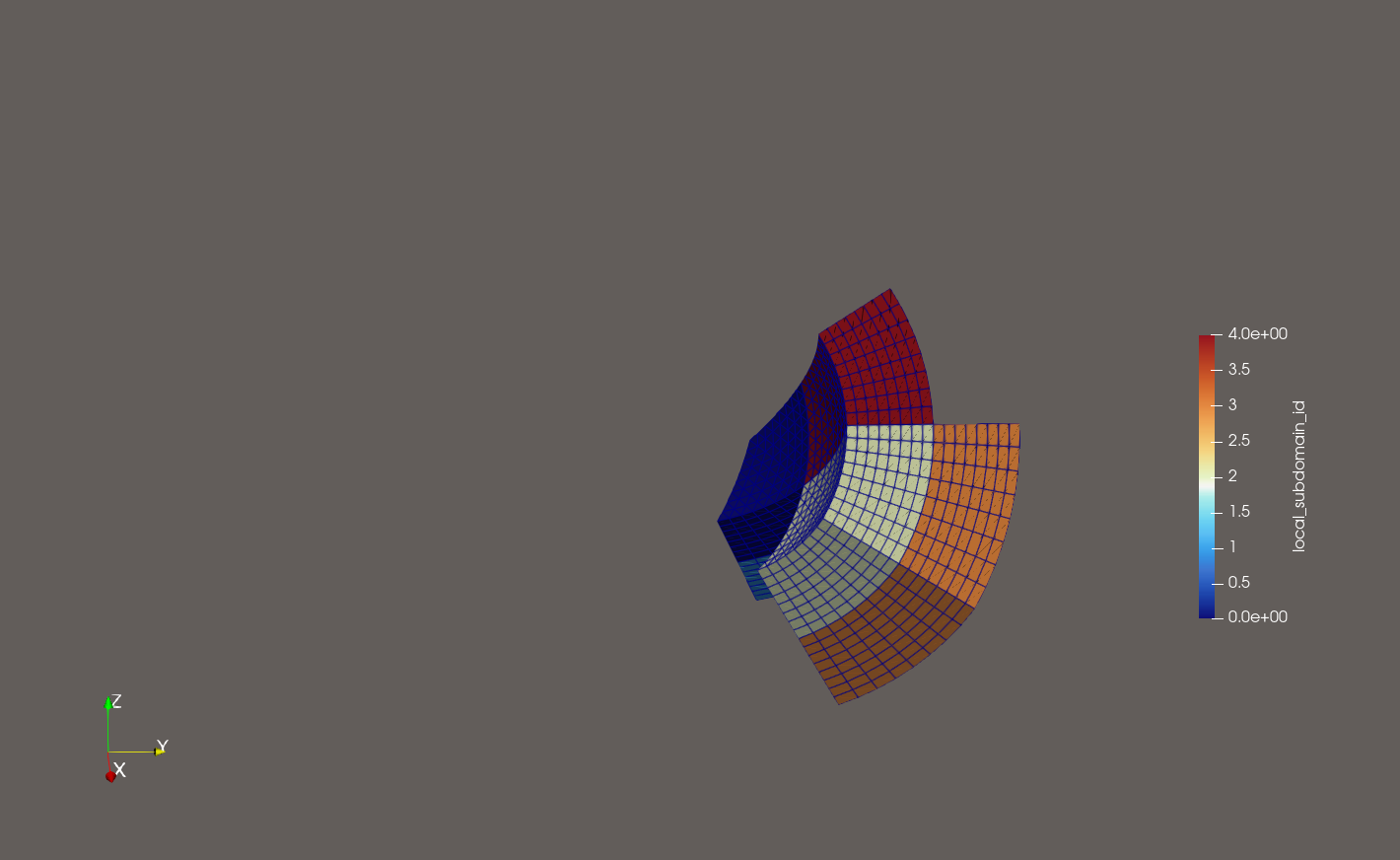

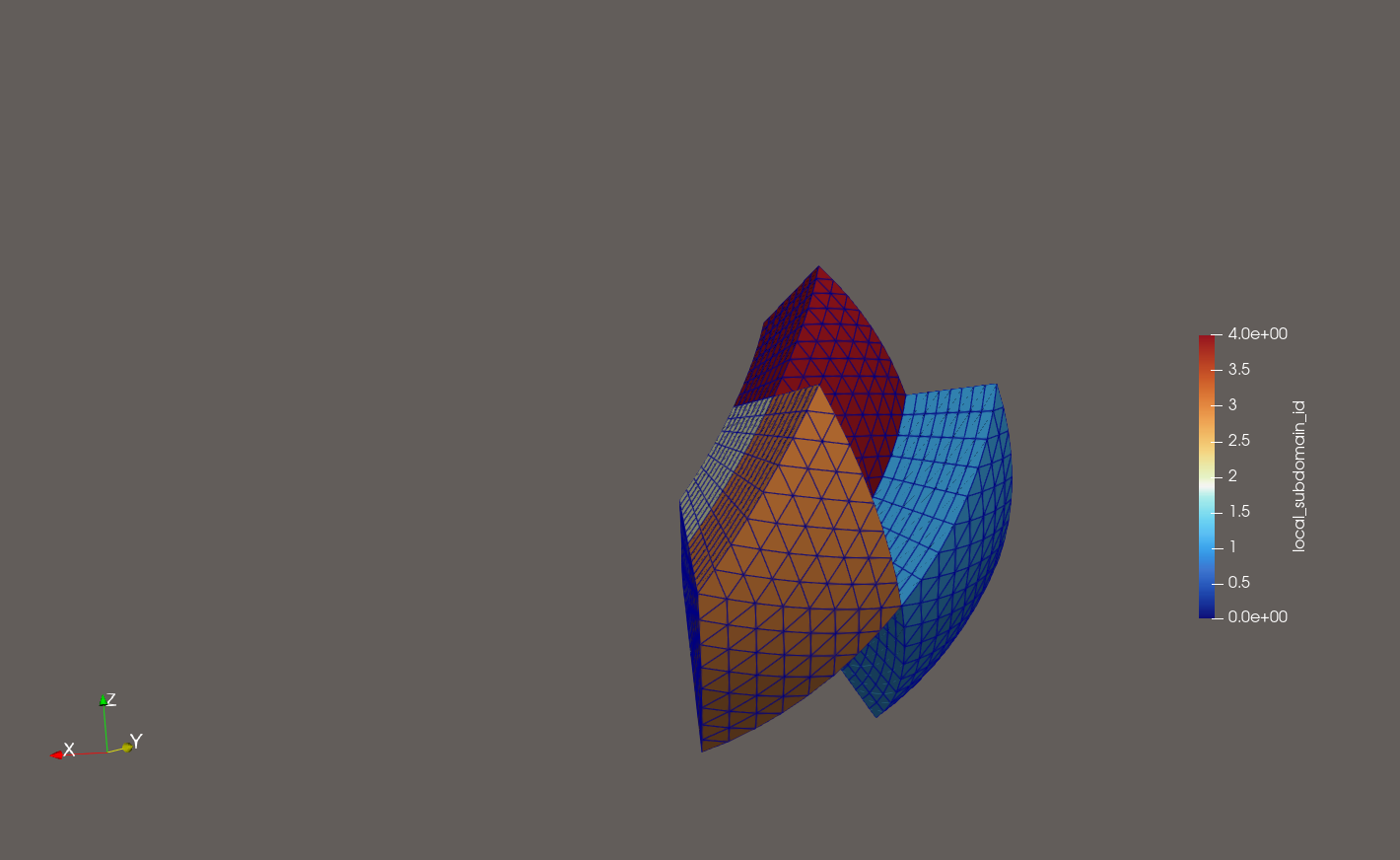

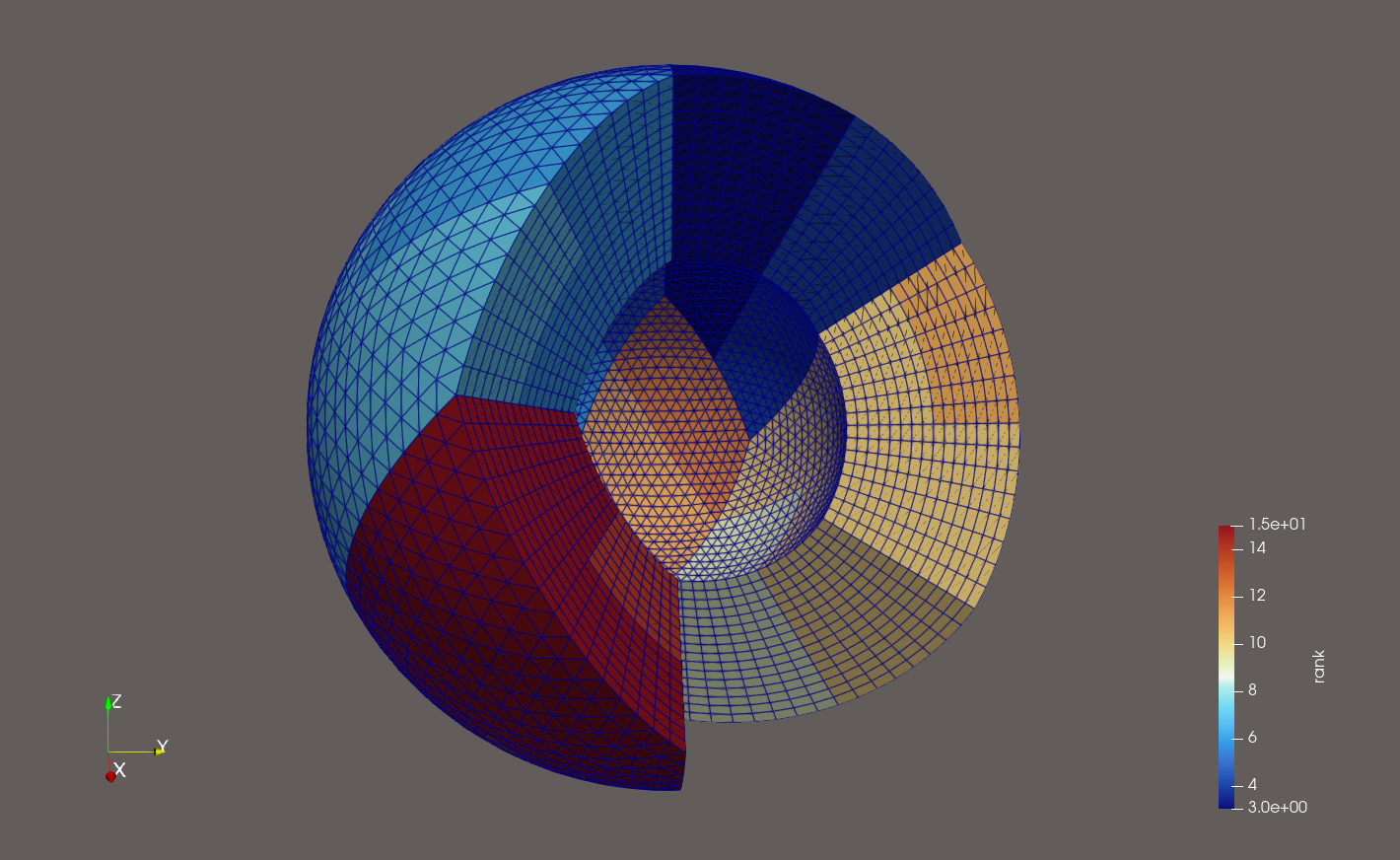

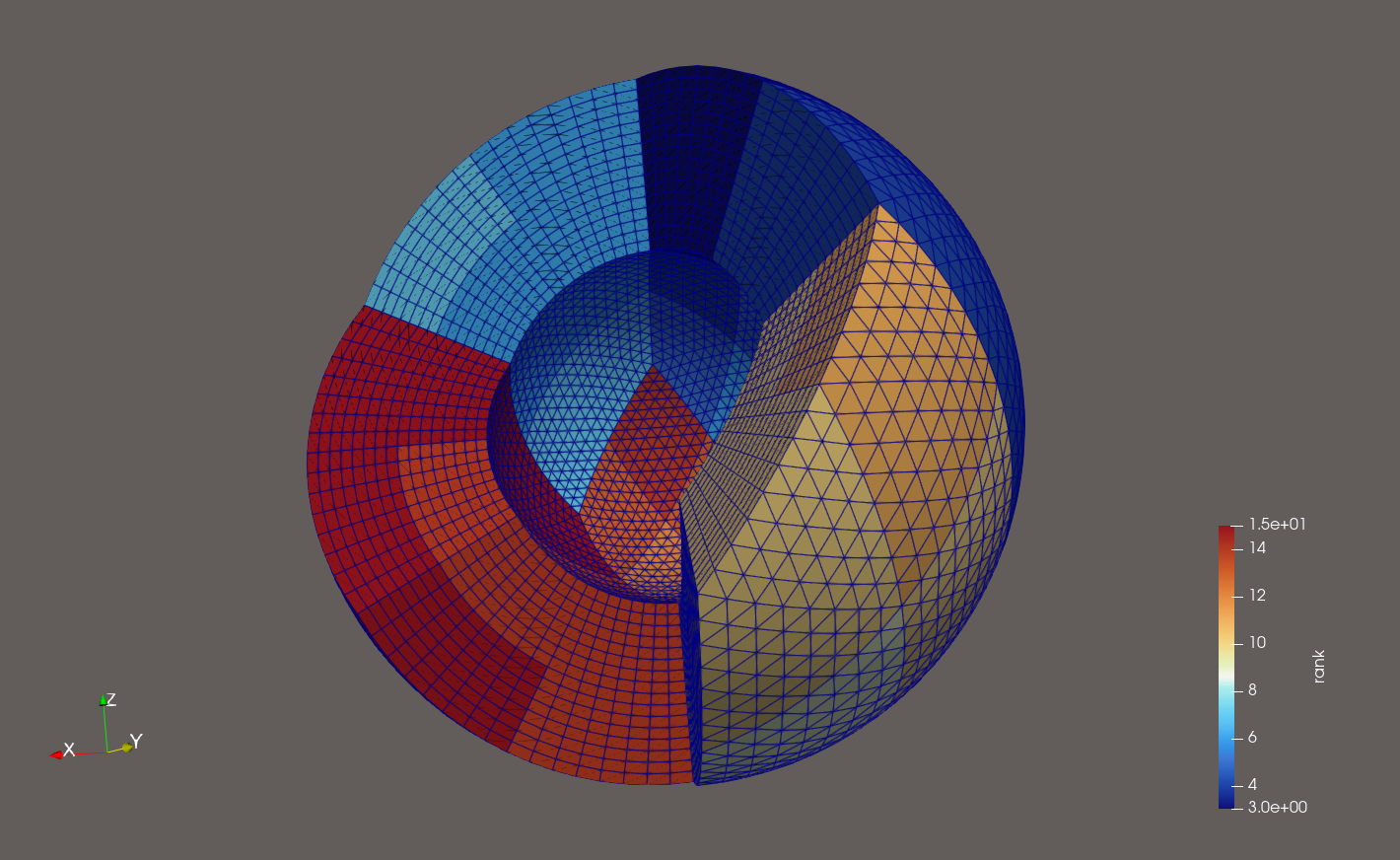

An example of a domain partitioning is shown in the figures of this section. They show (from two angles) a domain partitioned into 8 subdomains per diamond (subdomain refinement level == 1). The diamond refinement level is 4, so there are 2^4 == 16 hex cells in each direction per diamond and therefore 16 / 2 == 8 hex cells in each direction per subdomain. The domain is distributed among 16 processes.
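The numbers in this example can be reproduced with a short calculation. This is a sketch under one stated assumption: a subdomain refinement level of s splits each of the three directions of a diamond into 2^s parts, which is consistent with the 8 subdomains per diamond at level 1. The function name and dictionary keys are hypothetical, and the per-process value is only an average, since the actual distribution of subdomains is not specified here.

```python
NUM_DIAMONDS = 10  # the domain always consists of 10 diamonds


def partition_counts(diamond_level, subdomain_level, num_processes):
    """Compute cell and subdomain counts for the given refinement levels.

    Assumption: each subdomain refinement level halves the diamond in all
    three directions, giving (2**subdomain_level)**3 subdomains per diamond.
    """
    cells_per_dir_diamond = 2 ** diamond_level
    subdomains_per_dir = 2 ** subdomain_level
    subdomains_per_diamond = subdomains_per_dir ** 3
    cells_per_dir_subdomain = cells_per_dir_diamond // subdomains_per_dir
    total_subdomains = NUM_DIAMONDS * subdomains_per_diamond
    return {
        "cells_per_dir_diamond": cells_per_dir_diamond,
        "subdomains_per_diamond": subdomains_per_diamond,
        "cells_per_dir_subdomain": cells_per_dir_subdomain,
        "total_subdomains": total_subdomains,
        # Average only; the real assignment need not be perfectly balanced.
        "avg_subdomains_per_process": total_subdomains / num_processes,
    }


# Values from the example: diamond level 4, subdomain level 1, 16 processes.
counts = partition_counts(diamond_level=4, subdomain_level=1, num_processes=16)
```

For the example above this gives 80 subdomains in total, which corresponds to an average of 5 subdomains per process when 16 processes are used.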

Overall:

Subdomains colored by MPI rank (diamonds 0, 1, and 5 hidden), from two angles:

Subdomains of MPI rank 10 colored by local subdomain ID, from the same two angles: